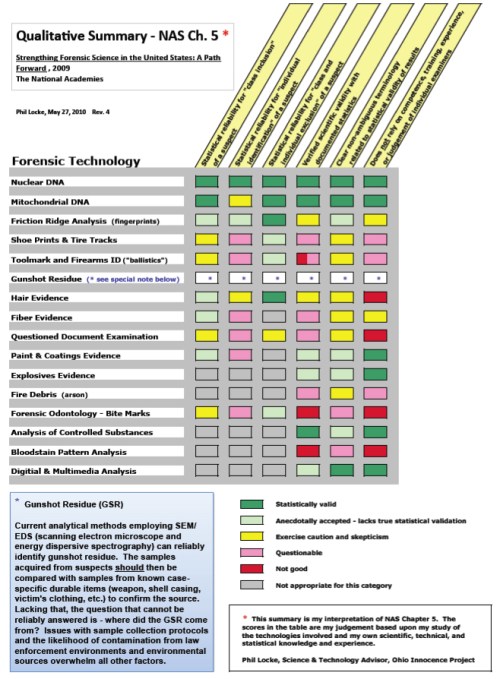

In 2009, the National Academy of Sciences of the United States published its congressionally commissioned report, “Strengthening Forensic Science in the United States: A Path Forward.” Chapter 5 of the report reviews a number of forensic disciplines and their shortcomings. A qualitative summary (by this author) of the Chapter 5 findings is presented in the following chart:

I have “graded” each of the forensic disciplines on the following attributes:

1) Statistical reliability for “class inclusion” of a suspect.

2) Statistical reliability for “individual identification” of a suspect.

3) Statistical reliability for “class and individual exclusion” of a suspect.

4) Verified scientific validity with documented statistics.

5) Clear, unambiguous terminology related to statistical validity of results.

6) Does not rely on competence, training, experience, or judgement of individual examiners.

Of great concern is all the red, pink, and yellow on the chart. Here is a link to a downloadable (and legible) copy of the chart:

The National Academies were given the congressional “charge” in the fall of 2005 to investigate and report on the state of forensics in the US. By the fall of 2006, a panel of 52 scientists, academics, and experts had been assembled and had started work. Two and a half years later, after exhaustive review of all findings, the report was published. What it had to say about forensics in the US (and by extension, the world) was not good. In summary, the panel found that forensics (with the exception of DNA) lacks scientific rigor and statistical validation.

It can be said that every forensic discipline (with the exception of DNA) fails the test of “show me the data from which I can compute a probability of occurrence.” This is, of course, echoed in the Daubert standard, which asks courts to consider a technique’s known or potential error rate; for most of these disciplines there is “no known error rate.”

Has this lack of scientific rigor and statistical validity led to wrongful convictions? ABSOLUTELY.

But ... more about the validity of forensics in future posts.

And sometimes the “experts” aren’t experts at all. They invent credentials & degrees, create their own private brand of junk science, & the prosecutor considers them “personal friends” (under oath, even!). God help us all!
